Scheduling Basics

General

A scheduler is software that controls a batch system. The batch system makes up the majority of a cluster (about 98%) and is its most powerful and most power-consuming part. This is where the big applications are intended to run, that is, computations that need a lot of memory and time.

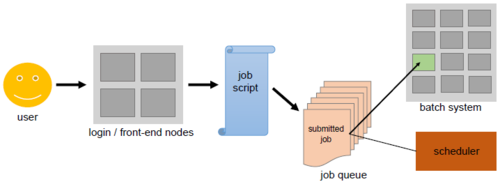

The batch system can be viewed as the counterpart of the login nodes. A user can simply log in to those so-called "front-end" nodes and type in commands that are immediately executed on the same machine. A batch system, however, consists of "back-end" nodes that are not directly accessible to the user. To run an application there, the user has to request time and memory resources and specify the application inside a jobscript.

The jobscript is then submitted to the batch system, that is, sent from the front-end nodes to the back-end nodes. Every job is first added to a queue. Based on the resources the job needs, the scheduler decides when the job leaves the queue and on which of the back-end nodes it will run.

Be careful about the resources you request and know your system's limits: if you request less time than your job actually needs to finish, the scheduler will simply kill the job once the given time is up. And if you request more memory than is available on the system, your job might be stuck in the queue forever.

Purpose

Generally speaking, every scheduler has three main goals:

- minimize the time between submitting a job and finishing it: no job should stay in the queue for extended periods of time

- optimize CPU utilization: the CPUs of the supercomputer are one of the core resources for a big application; therefore, there should be only few time slots in which a CPU is idle

- maximize the job throughput: process as many jobs per unit of time as possible
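
The three goals can be turned into numbers. The following is a minimal sketch, not the formula any particular scheduler uses: the `FinishedJob` record, the function name and the example data are hypothetical, and utilization is counted per node rather than per CPU for simplicity.

```python
from dataclasses import dataclass

@dataclass
class FinishedJob:
    submit: float  # time the job entered the queue (hours)
    start: float   # time the job started running (hours)
    end: float     # time the job finished (hours)
    nodes: int     # number of nodes the job occupied

def schedule_metrics(jobs, total_nodes):
    """Return (mean turnaround, node utilization, throughput) for finished jobs."""
    # Observed period: from the first submission to the last job finishing.
    makespan = max(j.end for j in jobs) - min(j.submit for j in jobs)
    # Goal 1: time between submission and completion, averaged over all jobs.
    mean_turnaround = sum(j.end - j.submit for j in jobs) / len(jobs)
    # Goal 2: fraction of the available node-hours that were actually used.
    busy_node_hours = sum((j.end - j.start) * j.nodes for j in jobs)
    utilization = busy_node_hours / (total_nodes * makespan)
    # Goal 3: finished jobs per hour.
    throughput = len(jobs) / makespan
    return mean_turnaround, utilization, throughput

# Hypothetical example: three jobs on a 6-node system.
jobs = [
    FinishedJob(submit=0, start=0, end=4, nodes=2),
    FinishedJob(submit=0, start=0, end=2, nodes=4),
    FinishedJob(submit=1, start=2, end=4, nodes=4),
]
print(schedule_metrics(jobs, total_nodes=6))  # (3.0, 1.0, 0.75)
```

In this example the nodes are never idle, so the utilization is 1.0, while the third job still spends one of its three hours waiting in the queue.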

Illustration

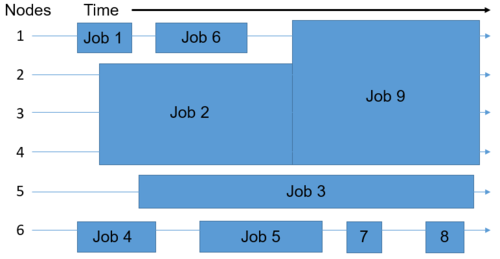

Assuming that the batch system you are using consists of 6 nodes, this is how the scheduler could place the nine jobs in the queue onto the available nodes. The goal is to eliminate wasted resources, which show up on the right as free areas, i.e. nodes with no job running on them. Therefore, the jobs may not be placed on the nodes in the same order in which they entered the queue. The space that a job takes up is determined by the time and the number of nodes required to execute it.

Scheduling Algorithms

There are two very basic strategies that schedulers can use to determine which job to run next. Note that modern schedulers do not stick strictly to just one of these algorithms, but rather employ a combination of the two. Besides, there are many more aspects a scheduler has to take into consideration, e.g. the current system load.

First Come, First Serve

Jobs are run in exactly the order in which they entered the queue. The advantage is that every job will definitely be run. However, very small jobs might wait for a disproportionately long time compared to their actual execution time.
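
The effect on small jobs can be seen in a minimal sketch. It assumes a strongly simplified system that runs only one job at a time, with all jobs already waiting in the queue; the function name and the run times are made up for illustration.

```python
def fcfs_wait_times(runtimes):
    """Waiting time of each job when jobs run one after another in arrival order."""
    waits = []
    clock = 0
    for runtime in runtimes:
        waits.append(clock)  # the job waits until all earlier jobs are done
        clock += runtime
    return waits

# Hypothetical queue: one 10-hour job followed by two 1-hour jobs.
print(fcfs_wait_times([10, 1, 1]))  # [0, 10, 11]
```

Both one-hour jobs wait at least ten times longer than they actually run.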

Shortest Job First

Based on the execution time declared in the jobscript, the scheduler can estimate how long the job will take to execute. The jobs are then ranked by that time from shortest to longest. While short jobs will start after a short waiting time, long-running jobs (or at least jobs declared as such) might never actually start, because newly submitted shorter jobs keep moving ahead of them.
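
The ranking can be sketched as follows, again assuming a simplified system that runs one job at a time and a queue that does not receive new jobs in the meantime. The function name and the run times are hypothetical.

```python
def sjf_wait_times(declared_runtimes):
    """Waiting time of each job (in queue order) when the shortest declared job runs first."""
    # Rank job indices by declared run time; ties keep their arrival order.
    order = sorted(range(len(declared_runtimes)), key=lambda i: declared_runtimes[i])
    waits = [0] * len(declared_runtimes)
    clock = 0
    for i in order:
        waits[i] = clock
        clock += declared_runtimes[i]
    return waits

# Hypothetical queue: one 10-hour job followed by two 1-hour jobs.
print(sjf_wait_times([10, 1, 1]))  # [2, 0, 1]
```

Here the long job waits only two hours, but every additional short job submitted before it starts would push it back further.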

Backfilling

With backfilling, the scheduler keeps the concept of "First Come, First Serve" without preventing long-running jobs from executing. The scheduler checks whether the first job in the queue can be executed. If so, the job is executed without further delay. If not, the scheduler goes through the rest of the queue to check whether another job can be executed without extending the waiting time of the first job in the queue. If it finds such a job, the scheduler simply runs it. Since jobs that need only few compute resources are easily "backfillable", small jobs will usually encounter short queue times.
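
A single scheduling decision of this kind can be sketched as follows. This is a simplified illustration and not the implementation of any real scheduler: it only counts whole nodes, it trusts the declared run time of every job, and the names `Job` and `pick_jobs_to_start` are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Job:
    name: str
    nodes: int     # number of nodes requested
    walltime: int  # declared run time limit (hours)

def pick_jobs_to_start(now, free_nodes, running, queue):
    """Return the names of queued jobs to start at time `now`.

    running: list of (end_time, nodes) for jobs that are already executing.
    queue:   list of Job in arrival order.
    """
    started = []
    queue = list(queue)
    running = list(running)

    # First Come, First Serve part: start jobs from the front while they fit.
    while queue and queue[0].nodes <= free_nodes:
        job = queue.pop(0)
        free_nodes -= job.nodes
        running.append((now + job.walltime, job.nodes))
        started.append(job.name)
    if not queue:
        return started

    # The first job does not fit. Find the earliest time at which enough
    # nodes will have been released for it (its reserved start time).
    head = queue[0]
    available = free_nodes
    reserved_start = None
    for end_time, nodes in sorted(running):
        available += nodes
        if available >= head.nodes:
            reserved_start = end_time
            break
    if reserved_start is None:
        return started  # the first job asks for more nodes than will ever be free
    spare_nodes = available - head.nodes  # nodes the first job will not need

    # Backfilling part: start later jobs only if they cannot delay the first job.
    for job in queue[1:]:
        if job.nodes > free_nodes:
            continue
        ends_in_time = now + job.walltime <= reserved_start
        if ends_in_time or job.nodes <= spare_nodes:
            if not ends_in_time:
                spare_nodes -= job.nodes  # still running when the first job starts
            free_nodes -= job.nodes
            started.append(job.name)
    return started

# Hypothetical 6-node system: 4 nodes are busy until hour 4, 2 nodes are free.
queue = [Job("A", 6, 5), Job("B", 2, 6), Job("C", 2, 3), Job("D", 1, 4)]
print(pick_jobs_to_start(now=0, free_nodes=2, running=[(4, 4)], queue=queue))  # ['C']
```

Job A has to wait until hour 4 for all six nodes. Job B would still occupy two of them at that point and is skipped, whereas job C finishes at hour 3 and can run in the gap. Job D would also finish in time, but no free node is left once C has started.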