Introduction & Theory

| Tutorial | |
|---|---|
| Title: | Benchmarking & Scaling |
| Provider: | HPC.NRW |
| Contact: | tutorials@hpc.nrw |
| Type: | Online |
| Topic Area: | Performance Analysis |
| License: | CC-BY-SA |
| Syllabus | |
| 1. Introduction & Theory | |
| 2. Interactive Manual Benchmarking | |
| 3. Automated Benchmarking using a Job Script | |
| 4. Automated Benchmarking using JUBE | |
| 5. Plotting & Interpreting Results | |

Scalability

Users who start running applications on an HPC system often assume that the more resources (compute nodes / cores) they use, the faster their code will run (i.e. they expect linear behaviour). Unfortunately, this is not the case for the majority of applications. How the runtime of a program changes with the amount of resources is referred to as scalability. For parallel programs, a limiting factor is described by Amdahl's law: a certain amount of the work in your code is done in parallel, but the speedup is ultimately limited by the sequential part of the program. However, besides the amount of resources, we can also vary the size of the problem we want to solve; this behavior is described by Gustafson's law. In this introduction we want to give you a brief overview of the two models and their consequences for running code on a cluster.

Strong Scaling

Amdahl's Law

We assume that the total execution time $T$ of a program is comprised of

- $t_s$, a part of the code which can only run in serial

- $t_p$, a part of the code which can be parallelized

- $t_o$, parallel overheads due to, e.g., communication

The execution time of a serial code would then be

$$T_s = t_s + t_p$$

The time for a parallel code, where the work is perfectly divided among $P$ processors, would be given by

$$T_P = t_s + \frac{t_p}{P} + t_o$$

Here $t_p / P$ is the time spent in the parallelizable part, reduced by the use of multiple CPUs. The total speedup $S$ is defined as the ratio of the sequential to the parallel runtime:

$$S = \frac{T_s}{T_P} = \frac{t_s + t_p}{t_s + \frac{t_p}{P} + t_o}$$

The efficiency $E$ is the speedup per processor, i.e.

$$E = \frac{S}{P}$$

Speedup and Efficiency

Knowing that $t_o \geq 0$, and writing $s = t_s / (t_s + t_p)$ as the fraction of the serial code, we can rewrite this to

$$S \leq \frac{1}{s + \frac{1 - s}{P}}, \qquad E \leq \frac{1}{sP + 1 - s}$$

This places an upper limit on the strong scalability, i.e. how quickly we can solve a problem of fixed size by increasing $P$. It is known as Amdahl's Law.
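To get a feeling for this limit, the bound can be evaluated numerically. The following is a minimal sketch, not part of the tutorial material: the function names `amdahl_speedup` and `amdahl_efficiency` and the serial fraction of 5% are assumptions chosen for illustration, and parallel overhead is neglected.

```python
def amdahl_speedup(serial_fraction: float, procs: int) -> float:
    """Upper bound on the speedup for a fixed problem size (overhead neglected)."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / procs)


def amdahl_efficiency(serial_fraction: float, procs: int) -> float:
    """Speedup per processor."""
    return amdahl_speedup(serial_fraction, procs) / procs


if __name__ == "__main__":
    serial_fraction = 0.05  # illustrative value, not a measurement
    for procs in (1, 2, 4, 8, 16, 64, 1024):
        s = amdahl_speedup(serial_fraction, procs)
        e = amdahl_efficiency(serial_fraction, procs)
        print(f"P={procs:5d}  S={s:7.2f}  E={e:5.2f}")
```

However many processors are used, the speedup in this model stays below the inverse of the serial fraction (here 1 / 0.05 = 20).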

Consequences

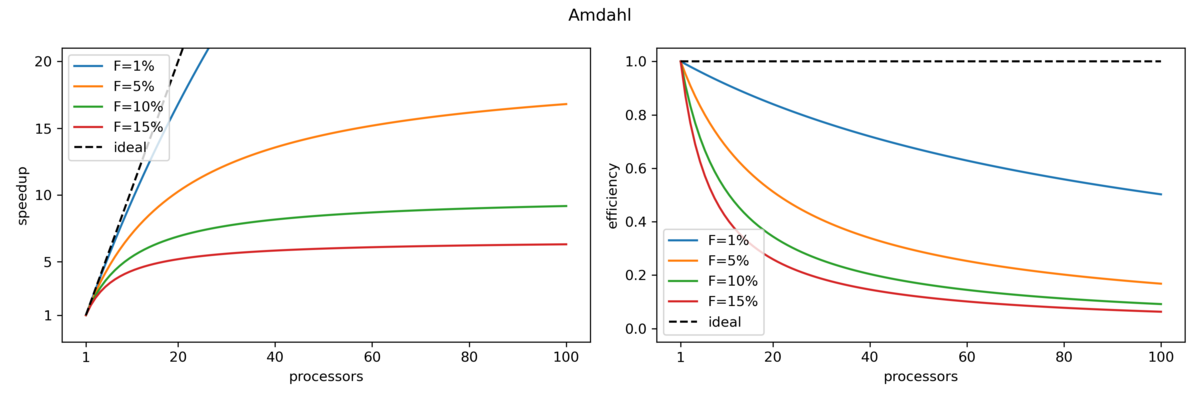

The figure below shows the upper limits for speedup and efficiency (as derived above) for different fractions of serial code:

We can see that the speedup is never linear in P, therefore the efficiency is never 100%:

- Some Examples:

- For P=2 processors, to achieve E=0.9, you have to parallelize 89% of the code

- For P=10 processors, to achieve E=0.9, you have to parallelize 99% of the code

- For P=10 processors, to achieve E=0.5, you have to parallelize 89% of the code
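The examples above can be reproduced by solving Amdahl's efficiency bound for the serial fraction, which gives a serial fraction of (1/E - 1) / (P - 1). The sketch below assumes the overhead-free model; the function name `required_parallel_fraction` is hypothetical.

```python
def required_parallel_fraction(target_efficiency: float, procs: int) -> float:
    """Parallel fraction needed to reach a target efficiency under Amdahl's law.

    Derived from E = 1 / (1 + s * (P - 1)), solved for the serial fraction s.
    """
    if procs < 2:
        raise ValueError("need at least two processors")
    if not (1.0 / procs <= target_efficiency <= 1.0):
        # Amdahl's model never gives a speedup below 1, so E >= 1/P.
        raise ValueError("target efficiency must lie in [1/P, 1]")
    serial_fraction = (1.0 / target_efficiency - 1.0) / (procs - 1)
    return 1.0 - serial_fraction


if __name__ == "__main__":
    for procs, eff in ((2, 0.9), (10, 0.9), (10, 0.5)):
        frac = required_parallel_fraction(eff, procs)
        print(f"P={procs:2d}, E={eff}: parallel fraction = {frac:.1%}")
```

Rounded to whole percent, the three cases give the 89%, 99% and 89% quoted in the list.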

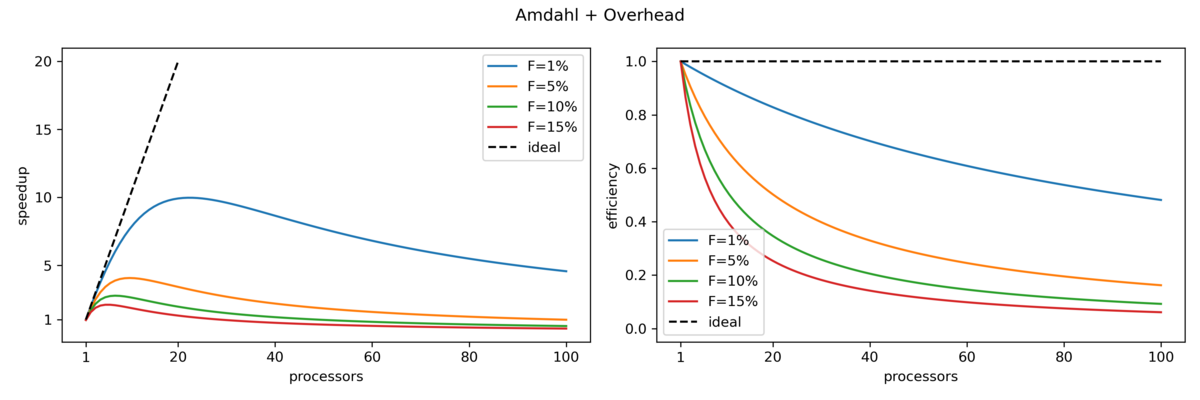

In the second figure terms for parallel overhead (e.g. communication between nodes) were added. This yields an even worse, but more realistic, behavior when increasing the number of processors:
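The effect of the overhead term can be sketched numerically. The tutorial does not state which overhead model was used for the figure, so the logarithmic growth below (overhead proportional to log2 of the processor count, in units of the serial runtime) is purely an assumption for illustration, as are the function names and parameter values.

```python
import math


def speedup_with_overhead(serial_fraction: float, procs: int,
                          overhead_coeff: float) -> float:
    """Speedup with an ASSUMED overhead of overhead_coeff * log2(procs).

    All times are expressed as fractions of the serial runtime.
    """
    overhead = overhead_coeff * math.log2(procs)  # hypothetical overhead model
    parallel_time = serial_fraction + (1.0 - serial_fraction) / procs + overhead
    return 1.0 / parallel_time


def best_processor_count(serial_fraction: float, overhead_coeff: float,
                         max_procs: int) -> int:
    """Processor count in 1..max_procs that maximizes the modeled speedup."""
    return max(
        range(1, max_procs + 1),
        key=lambda p: speedup_with_overhead(serial_fraction, p, overhead_coeff),
    )


if __name__ == "__main__":
    s, c = 0.05, 0.01  # illustrative values, not measurements
    best = best_processor_count(s, c, max_procs=1024)
    print(f"best P = {best}, speedup = {speedup_with_overhead(s, best, c):.2f}")
    print(f"speedup at P=1024: {speedup_with_overhead(s, 1024, c):.2f}")
```

With a growing overhead term the modeled speedup no longer saturates but reaches a maximum and then decreases, so adding processors beyond that point makes the run slower.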

Weak Scaling

Fortunately for us, many applications can use more resources efficiently when the problem size grows along with them. Therefore, instead of throwing large amounts of resources at small problems, we can increase the problem size as we increase the amount of resources. This is referred to as weak scaling.

Gustafson's Law

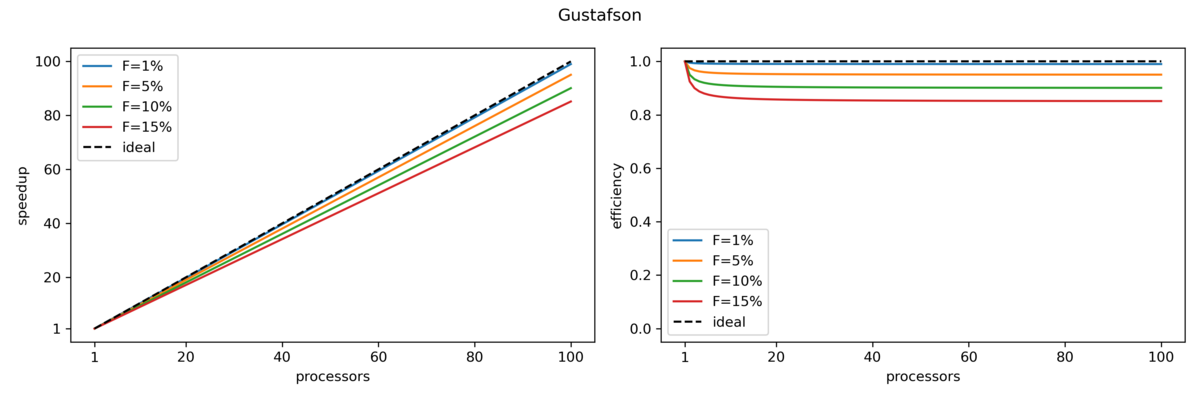

Gustafson's law assumes that the parallel part of a program scales linearly with the amount of resources, while the serial part does not increase with increasing problem size. The speedup is here given by

$$S(P) = s + (1 - s)\,P$$

where $P$ is the number of processes, $s$ is again the fraction of the serial part of the code and therefore $(1 - s)$ the fraction of the parallel part. Here the speedup increases linearly with respect to the number of processors (with a slope smaller than one).
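The difference between the two models becomes clear when both are evaluated side by side. This is a minimal sketch with hypothetical function names and an illustrative serial fraction of 5%; it assumes the standard overhead-free forms of both laws.

```python
def gustafson_speedup(serial_fraction: float, procs: int) -> float:
    """Scaled speedup when the problem size grows with the processor count."""
    return serial_fraction + (1.0 - serial_fraction) * procs


def amdahl_speedup(serial_fraction: float, procs: int) -> float:
    """Speedup bound for a fixed problem size, shown for comparison."""
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / procs)


if __name__ == "__main__":
    serial_fraction = 0.05  # illustrative value, not a measurement
    print("    P   Amdahl  Gustafson")
    for procs in (1, 4, 16, 64, 256):
        a = amdahl_speedup(serial_fraction, procs)
        g = gustafson_speedup(serial_fraction, procs)
        print(f"{procs:5d}  {a:7.2f}  {g:9.2f}")
```

The Gustafson column grows by (1 - serial_fraction) for every added processor, while the Amdahl column levels off.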

Speedup and Efficiency

Knowing about speedup and efficiency, we can now try to measure them ourselves.
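Once runtimes have been measured, turning them into speedup and efficiency is a short calculation. The sketch below assumes the timings are collected in a dictionary that maps the processor count to the wall time in seconds and that a run on one processor is included; the function name `scaling_table` is hypothetical, and the numbers in the usage part are made-up placeholders, not measurements.

```python
def scaling_table(runtimes):
    """Return (procs, runtime, speedup, efficiency) rows sorted by processor count.

    runtimes maps processor count -> wall time in seconds and must
    contain an entry for 1 processor, which serves as the baseline.
    """
    if 1 not in runtimes:
        raise ValueError("a single-processor run is required as baseline")
    baseline = runtimes[1]
    rows = []
    for procs in sorted(runtimes):
        speedup = baseline / runtimes[procs]
        rows.append((procs, runtimes[procs], speedup, speedup / procs))
    return rows


if __name__ == "__main__":
    # Placeholder timings for illustration only; replace with your own data.
    example = {1: 100.0, 2: 50.0, 4: 40.0}
    for procs, runtime, speedup, efficiency in scaling_table(example):
        print(f"P={procs:3d}  T={runtime:7.1f}s  S={speedup:5.2f}  E={efficiency:5.2f}")
```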
