Difference between revisions of "Benchmarking & Scaling Tutorial/Manual Benchmarking"

| Line 3: | Line 3: | ||

__TOC__ | __TOC__ | ||

| − | + | == Prepare your input == | |

It is always a good idea to create some test input for your simulation. If you have a specific system you want to benchmark for a production run make sure you limit the time the simulation is run for. If you also want to test some code given different input sizes of data, prepare those systems (or data-sets) beforehand. | It is always a good idea to create some test input for your simulation. If you have a specific system you want to benchmark for a production run make sure you limit the time the simulation is run for. If you also want to test some code given different input sizes of data, prepare those systems (or data-sets) beforehand. | ||

| Line 33: | Line 33: | ||

cd GROMACS_BENCHMARK_INPUT/ | cd GROMACS_BENCHMARK_INPUT/ | ||

| − | + | == Allocate an interactive session == | |

| − | |||

As a first measure you should allocate a single node on your cluster and start an interactive session, i.e. login on the node. Often there are dedicated partitions/queues named "express" or "testing" or the like, for exactly this purpose with a time limit of a few hours. For a cluster running SLURM this could for example look like: | As a first measure you should allocate a single node on your cluster and start an interactive session, i.e. login on the node. Often there are dedicated partitions/queues named "express" or "testing" or the like, for exactly this purpose with a time limit of a few hours. For a cluster running SLURM this could for example look like: | ||

| Line 42: | Line 41: | ||

This will allocate 1 node with 72 tasks on the ''express'' partition for 2 hours and ''log you in'' (i.e. start a new bash shell) on the allocated node. Adjust this according to your clusters provided resources! | This will allocate 1 node with 72 tasks on the ''express'' partition for 2 hours and ''log you in'' (i.e. start a new bash shell) on the allocated node. Adjust this according to your clusters provided resources! | ||

| − | + | == First test run == | |

| − | |||

Next, you want to navigate to your input data and load the environment module, which gives you access to GROMACS binaries. Since environment modules are organized differently on many cluster the steps to load the correct module might look different at your site. | Next, you want to navigate to your input data and load the environment module, which gives you access to GROMACS binaries. Since environment modules are organized differently on many cluster the steps to load the correct module might look different at your site. | ||

| Line 54: | Line 52: | ||

<code>mpirun -n 18</code> will spawn 18 processes calling the <code>gmx_mpi</code> executable. <code>mdrun -deffnm MD_5NM_WATER</code> tells GROMACS to run a molecular dynamics simulation and use the MD_5NM_WATER.tpr as its input. <code>-nsteps 10000</code> tells it to only run 10000 steps of the simulation instead of the default 500000. We also added the <code>-quiet</code> flag to suppress some general output as GROMACS is quiet ''chatty''. | <code>mpirun -n 18</code> will spawn 18 processes calling the <code>gmx_mpi</code> executable. <code>mdrun -deffnm MD_5NM_WATER</code> tells GROMACS to run a molecular dynamics simulation and use the MD_5NM_WATER.tpr as its input. <code>-nsteps 10000</code> tells it to only run 10000 steps of the simulation instead of the default 500000. We also added the <code>-quiet</code> flag to suppress some general output as GROMACS is quiet ''chatty''. | ||

| + | |||

| + | === Output === | ||

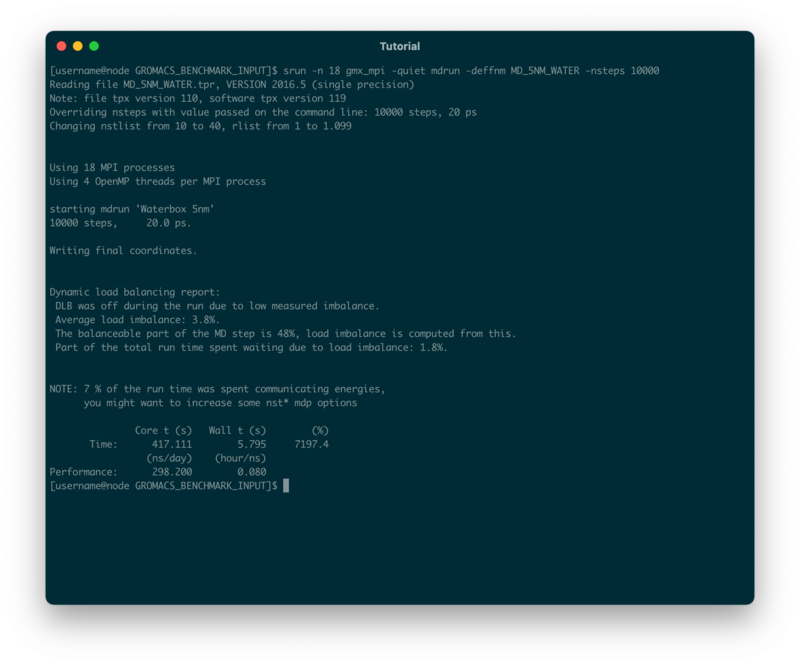

The simulation will presumably take a couple of seconds and the output will look similar to this: | The simulation will presumably take a couple of seconds and the output will look similar to this: | ||

| − | + | [[File:Benchmark_tutorial_gromacs_1.png|800px]] | |

| − | |||

| − | |||

| − | |||

| − | |||

| + | GROMACS gives out a lot of useful information about the simulation we just ran. First it tells us, how many MPI processes were started in total | ||

| − | Using 18 MPI processes | + | Using 18 MPI processes |

| − | + | We also get the information that for every MPI process we started, 4 OpenMP threads were created as well | |

| − | Using 4 OpenMP threads per MPI process | + | Using 4 OpenMP threads per MPI process |

| − | + | At the end of the output, we will be given some performance metrics: | |

| − | |||

| − | + | * Wall time (or elapsed real time): 7.145s | |

| + | * Core time (accumulated time of each core): 514.146s | ||

| + | * ns/day (nanoseconds one could simulate in 24h): 241.867 | ||

| + | * hours/ns (how many hours to simulate 1ns): 0.099 | ||

| + | * The total percentage of CPU usage (max = cores*100%): 7195.7% | ||

| − | + | == Second test run and monitoring with htop == | |

| − | |||

| − | |||

| − | |||

| − | |||

| + | The 18 MPI processes each using 4 OpenMP threads add up to 18x4 (72) cores being used - so all of the resources we allocated when we requested the interactive session. However, we do want a bit more control over how many cores are actually being used. Therefore we can either specify an environment variable to limit the number of OpenMP threads, i.e. | ||

| − | + | export OMP_NUM_THREADS=1 | |

| − | |||

| − | |||

| − | |||

| − | |||

| + | or we can directly tell GROMACS to only use 1 OpenMP thread per process by adding the flag | ||

| − | + | -ntomp 1 | |

| − | + | For the second run we want to test the adjusted parameters and also monitor the CPU cores in real-time. We can do this for example by opening a second shell on the same node. On most clusters it is allowed to SSH on to a node you currently have allocated resources on. To do this, open a new terminal window (or tab), ssh on to the cluster and then on to the node your interactive session is running on | |

| − | |||

| − | |||

| − | + | ssh cluster | |

| + | ssh node | ||

| − | + | On the node run the ''htop'' program by typing | |

| − | + | htop | |

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

| − | |||

| − | + | You will see a list of all running processes on the node as well as | |

| − | |||

| − | |||

| − | |||

| − | |||

Revision as of 16:47, 21 September 2021

| Tutorial | |

|---|---|

| Title: | Benchmarking & Scaling |

| Provider: | HPC.NRW |

| Contact: | tutorials@hpc.nrw |

| Type: | Online |

| Topic Area: | Performance Analysis |

| License: | CC-BY-SA |

| Syllabus | |

| 1. Introduction & Theory | |

| 2. Interactive Manual Benchmarking | |

| 3. Automated Benchmarking using a Job Script | |

| 4. Automated Benchmarking using JUBE | |

| 5. Plotting & Interpreting Results | |

Prepare your input

It is always a good idea to create some test input for your simulation. If you want to benchmark a specific system for a production run, make sure you limit how long the simulation runs. If you also want to test how the code behaves for different input sizes, prepare those systems (or data sets) beforehand.

For this tutorial we use the molecular dynamics code GROMACS, as it is a common simulation program and readily available on most clusters. We prepared three test systems with an increasing number of water molecules, which you can download from here:

- Download test input (tgz archive)

- SHA256 checksum: d1677e755bf5feac025db6f427a929cbb2b881ee4b6e2ed13bda2b3c9a5dc8b0
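Before unpacking, it can be worth checking the download against the checksum above. The following is a minimal sketch, assuming the archive was saved as gromacs_benchmark_input.tgz in the current directory and that the sha256sum tool (GNU coreutils) is installed; verify_sha256 is a hypothetical helper name, not part of the tutorial material.

```shell
#!/usr/bin/env bash
# verify_sha256 FILE EXPECTED_HASH
# Returns 0 if the SHA256 digest of FILE equals EXPECTED_HASH, 1 otherwise.
verify_sha256() {
    local file="$1" expected="$2" actual
    # sha256sum prints "<hash>  <filename>"; keep only the hash.
    actual="$(sha256sum "$file" | awk '{print $1}')"
    [ "$actual" = "$expected" ]
}

archive="gromacs_benchmark_input.tgz"   # assumed file name of the download
expected="d1677e755bf5feac025db6f427a929cbb2b881ee4b6e2ed13bda2b3c9a5dc8b0"

if [ -f "$archive" ]; then
    if verify_sha256 "$archive" "$expected"; then
        echo "checksum OK"
    else
        echo "checksum MISMATCH, download the archive again" >&2
    fi
else
    echo "$archive not found in $(pwd)" >&2
fi
```

If the checksum does not match, the file was most likely truncated or altered during the transfer and should be downloaded again.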

The unpacked tar archive contains a folder with three binary input files (.tpr), each of which can be used directly as input for GROMACS. The files should be compatible with GROMACS version 2016.5 and later:

| System | Side length of simulation box | No. of atoms | Simulation type | Default simulation time |
|---|---|---|---|---|
| MD_5NM_WATER.tpr | 5 nm | 12165 | NVE | 1 ns (500000 steps of 2 fs) |
| MD_10NM_WATER.tpr | 10 nm | 98319 | NVE | 1 ns (500000 steps of 2 fs) |
| MD_15NM_WATER.tpr | 15 nm | 325995 | NVE | 1 ns (500000 steps of 2 fs) |

Download the tar archive to your local machine and upload it to an appropriate directory on your cluster.

Unpack the archive and change into the newly created folder:

tar xfa gromacs_benchmark_input.tgz

cd GROMACS_BENCHMARK_INPUT/

Allocate an interactive session

As a first step, allocate a single node on your cluster and start an interactive session, i.e. log in to the node. Many clusters have dedicated partitions/queues for exactly this purpose, named "express", "testing" or similar, with a time limit of a few hours. On a cluster running SLURM this could, for example, look like:

srun -p express -N 1 -n 72 -t 02:00:00 --pty bash

This will allocate 1 node with 72 tasks on the express partition for 2 hours and log you in (i.e. start a new bash shell) on the allocated node. Adjust these values to the resources your cluster provides!
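Once the new shell has started, a quick check can confirm that you are really inside the allocation and show which node you landed on. This is a small sketch, assuming a SLURM cluster; show_allocation is a hypothetical helper name, while SLURM_JOB_ID, SLURM_JOB_NODELIST and SLURM_NTASKS are environment variables that SLURM sets inside a job.

```shell
#!/usr/bin/env bash
# Print a short summary of the current allocation.
# Outside of a SLURM job the variables are unset, so each one
# falls back to the string "unset".
show_allocation() {
    echo "node:   $(uname -n)"
    echo "job id: ${SLURM_JOB_ID:-unset}"
    echo "nodes:  ${SLURM_JOB_NODELIST:-unset}"
    echo "tasks:  ${SLURM_NTASKS:-unset}"
}

show_allocation
```

The node name printed in the first line is also the host you would connect to when opening a second shell on the same node.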

First test run

Next, navigate to your input data and load the environment module that gives you access to the GROMACS binaries. Since environment modules are organized differently from cluster to cluster, the steps to load the correct module might look different at your site.

module load GROMACS

We will make a first test run using the smallest system (5 nm) and 18 MPI processes to get a feeling for how long one simulation might take. Note that we are using the gmx_mpi executable, which allows us to run the code on multiple compute nodes later. There might be a second binary on your system, just called "gmx", which uses thread-MPI parallelism and is only suitable for shared-memory systems (i.e. a single node).

mpirun -n 18 gmx_mpi -quiet mdrun -deffnm MD_5NM_WATER -nsteps 10000

mpirun -n 18 will spawn 18 processes calling the gmx_mpi executable. mdrun -deffnm MD_5NM_WATER tells GROMACS to run a molecular dynamics simulation and use MD_5NM_WATER.tpr as its input. -nsteps 10000 tells it to run only 10000 steps of the simulation instead of the default 500000. We also added the -quiet flag to suppress some general output, as GROMACS is quite chatty.

Output

The simulation should take only a few seconds, and the output will look similar to this:

[Figure: screenshot of the GROMACS output, Benchmark_tutorial_gromacs_1.png]

GROMACS prints a lot of useful information about the simulation we just ran. First, it tells us how many MPI processes were started in total:

Using 18 MPI processes

We also learn that 4 OpenMP threads were created for every MPI process we started:

Using 4 OpenMP threads per MPI process

At the end of the output, we will be given some performance metrics:

- Wall time (or elapsed real time): 7.145s

- Core time (accumulated time of each core): 514.146s

- ns/day (nanoseconds one could simulate in 24h): 241.867

- hours/ns (how many hours to simulate 1ns): 0.099

- The total percentage of CPU usage (max = cores*100%): 7195.7%
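These numbers are related by simple arithmetic, which makes them easy to sanity-check. The sketch below recomputes the derived metrics from the wall time and core time, assuming the 2 fs time step listed in the table of test systems and the 10000 steps requested on the command line; derive_metrics is a hypothetical helper name. Small deviations from the values GROMACS reports are expected, since the printed times are rounded.

```shell
#!/usr/bin/env bash
# derive_metrics WALL_SECONDS CORE_SECONDS STEPS TIMESTEP_FS
# Recompute ns/day, hours/ns and CPU usage from the raw run numbers.
derive_metrics() {
    awk -v wall="$1" -v core="$2" -v steps="$3" -v dt="$4" 'BEGIN {
        sim_ns     = steps * dt / 1000000     # 1 ns = 1000000 fs
        ns_per_day = sim_ns / wall * 86400    # 86400 seconds per day
        hours_ns   = 24 / ns_per_day
        cpu_pct    = core / wall * 100        # 100% = one fully busy core
        printf "simulated time: %.3f ns\n", sim_ns
        printf "ns/day:         %.1f\n", ns_per_day
        printf "hours/ns:       %.3f\n", hours_ns
        printf "CPU usage:      %.0f%%\n", cpu_pct
    }'
}

# Values taken from the first test run above.
derive_metrics 7.145 514.146 10000 2
```

This yields roughly 241.8 ns/day, 0.099 hours/ns and about 7196% CPU usage, in line with the reported values. A CPU usage close to 7200% indicates that all 72 cores were busy for almost the entire run.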

Second test run and monitoring with htop

The 18 MPI processes, each using 4 OpenMP threads, add up to 18 × 4 = 72 cores in use, i.e. all of the resources we allocated when we requested the interactive session. However, we want more control over how many cores are actually used. We can either set an environment variable to limit the number of OpenMP threads, e.g.

export OMP_NUM_THREADS=1

or we can directly tell GROMACS to only use 1 OpenMP thread per process by adding the flag

-ntomp 1
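Put together with the command from the first test run, the adjusted call could look like the sketch below. It only combines flags already used in this tutorial; the choice of 18 processes with 1 thread each (18 cores in total) is an assumption for illustration, and build_mdrun_cmd is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# build_mdrun_cmd NUM_MPI_PROCESSES OPENMP_THREADS_PER_PROCESS
# Print the mdrun command line for the smallest test system.
build_mdrun_cmd() {
    local nprocs="$1" nthreads="$2"
    echo "mpirun -n ${nprocs} gmx_mpi -quiet mdrun -deffnm MD_5NM_WATER -nsteps 10000 -ntomp ${nthreads}"
}

cmd="$(build_mdrun_cmd 18 1)"
echo "$cmd"

# Execute the command only where an MPI launcher and GROMACS are available.
if command -v mpirun >/dev/null 2>&1 && command -v gmx_mpi >/dev/null 2>&1; then
    $cmd
fi
```

Keeping the process and thread counts as parameters makes it easy to try other combinations later, as long as their product does not exceed the number of cores you allocated.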

For the second run we want to test the adjusted parameters and also monitor the CPU cores in real time. One way to do this is to open a second shell on the same node. On most clusters you are allowed to SSH into a node on which you currently have allocated resources. To do this, open a new terminal window (or tab), SSH into the cluster and from there into the node your interactive session is running on:

ssh cluster

ssh node

On the node, start the htop program by typing

htop

You will see a list of all running processes on the node as well as

Next: Automated Benchmarking using a Job Script

Previous: Introduction and Theory